
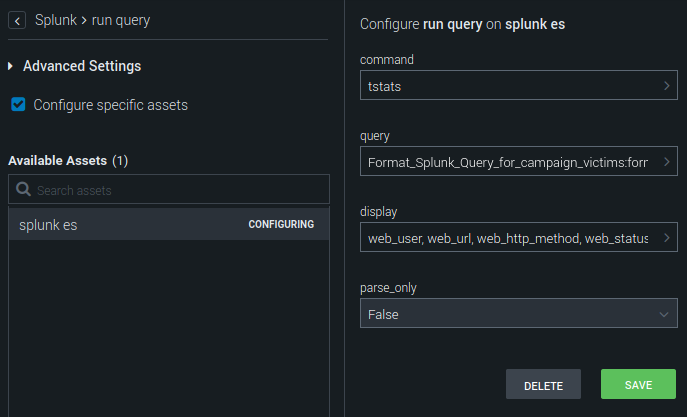

Leveraging Splunk terms by addressing a simple, yet highly demanded SecOps use case.

TL;DR: tstats + term() + walklex = super speedy (and accurate) queries.

Security Operations teams will search for IOCs against Splunk events, using good or badly designed queries, whether you want it or not. So you had better provide analytics and interfaces that let them do their job properly while avoiding performance issues and, more importantly, false negatives.

When you have an IP address, do you map all data sources that might contain a valid IP address entry? What if a field is not CIM compliant; how do you add it to your scope? Out-of-the-box data models cannot help you here. And what if, even after mapping all potential fields, the query still runs super slow? This is the problem we are going to approach in this post.

I've been thinking about writing this one for a while, and since the infamous Log4j vulnerability was published along with many IOC lists, I thought this would be a good time for it.

Splunk provides various methods for speeding up search results, from summary indexing, to metrics indexes, to accelerated data models (DMs). There are a few overlooked ones not even seasoned Splunkers know about… Today we focus on a query-level technique.

Long term solution: Data Preparation

Dealing with large-scale datasets or indexes usually requires tremendous work on data analysis, not unlike the challenges our data science colleagues face on a daily basis. Evaluating the best approach (summary index, accelerated DMs, etc.) is super hard. You not only need to know how the data is going to be consumed (use cases) but also what the overall cost is (maintenance, performance impact). Some use cases share similar target data. For instance, account brute-force detection and privileged access reports both tap into authentication telemetry.

The fact is, data preparation takes time. Until those datasets are prepared or optimized, Splunk content engineers need to leverage other means to get the job done. Here's where query optimization comes in handy.

In the past, I've had the pleasure of working with many super talented folks while a member of Splunk PS; one of them is Richard Morgan. His .conf talk is a must for Splunk developers and analytics engineers: TSTATS and PREFIX. I'm still not leveraging PREFIX() in a production use case (yet), but I'm pretty sure it will come in handy at some point.

Why am I mentioning that?

After watching this awesome talk, I had an idea for updating the "IOC Scanner" dashboard I've been deploying to pretty much every single customer since 2017 while working as a contractor.

I'm not going into the details about tstats and term(); just assume they basically enable you to run faster queries. The following read is going to give you a good idea about them in case you are not familiar yet:

As a quick example, below is a query that returns all index and sourcetype pairs containing the word (term) 'mimikatz':

    | tstats count where index=* TERM(mimikatz) by index, sourcetype

I even suggest a simple exercise for quickly discovering alert-like keywords in a new data source:

    | tstats count where index=any TERM(exploit) by index, sourcetype, _time span=1d
    | eval matches="exploit"
    | append [...]
    | append [...]
    | append [...]
    | append [...]
    | append [...]
    | append [...]
    | append [...]
    | append [...]
    | append [...]
    | append [...]
    | stats sparkline, sum(count) AS event_count, values(matches) AS matches by index, sourcetype

So why not search everything in Splunk using tstats and term()? The simple (but still complicated) answer is that not every keyword trace makes it into a Splunk term containing only the searched keyword itself. Besides the significant change in terms of UX, there are more caveats.

What caught my attention was the use of the walklex command to discover search terms.

After understanding how Splunk determines what is a term and what is not, it's easy to guess that not all values we pass to the TERM() directive will work.
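The "not every value is a term" caveat can be illustrated with a simplified Python model. This is not how Splunk's indexer is implemented. The model assumes that TERM() only matches a string forming a whole segment between major breakers. It uses a hand-picked subset of the default major breakers, while the real segmenters.conf list is longer. It also ignores the minor-breaker segments Splunk indexes as well. The helper names (`major_segments`, `term_matches`, `lexicon_scan`) and the sample events are hypothetical. `lexicon_scan` only loosely mimics what walklex exposes: a view of the indexed terms, which you can search for every term an IOC is embedded in.

```python
import re

# Hypothetical, simplified subset of Splunk's default major breakers.
# The real segmenters.conf list is longer (it also has URL-encoded forms).
MAJOR_BREAKERS = r"[\s\[\]<>(){}|!;,'\"&?+*]"


def major_segments(event: str) -> set[str]:
    """Split a raw event on major breakers, dropping empty pieces."""
    return {seg for seg in re.split(MAJOR_BREAKERS, event) if seg}


def term_matches(event: str, keyword: str) -> bool:
    """Model of TERM(keyword): true only if keyword is a whole major segment."""
    return keyword in major_segments(event)


def lexicon_scan(events: list[str], keyword: str) -> set[str]:
    """Walklex-like lookup (simplified): every major segment containing keyword."""
    lexicon = set().union(*(major_segments(e) for e in events))
    return {term for term in lexicon if keyword in term}


events = [
    "2021-12-10 connection from 10.0.0.1 port 443",  # IP bounded by spaces
    "action=allowed src=10.0.0.1 dst=10.0.0.2",      # '=' is only a minor breaker
]

if __name__ == "__main__":
    ioc = "10.0.0.1"
    for e in events:
        # A substring search finds the IOC in both events; the TERM() model in one.
        print(f"{e!r}: substring={ioc in e}, TERM()={term_matches(e, ioc)}")
    # The scan surfaces the larger term the IOC is embedded in.
    print(sorted(lexicon_scan(events, ioc)))
```

In this model, only a search for the larger term `src=10.0.0.1` would hit the second event. That kind of variant is what a walklex pass over the real index can help you discover before you build the IOC search.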

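The keyword-discovery exercise repeats the same tstats/eval pair once per keyword, as an `append` subsearch, which gets tedious to type by hand. Below is a minimal sketch that renders such a query from a keyword list. The function names, the default `index=*`, and the sample keywords are hypothetical. Keywords are assumed to be plain strings without quotes, spaces, or brackets, and the generated SPL should be reviewed in your own environment before use.

```python
def tstats_for(keyword: str, index: str) -> str:
    """One tstats/eval pair counting events that contain TERM(keyword) per day."""
    return (
        f"| tstats count where index={index} TERM({keyword}) "
        "by index, sourcetype, _time span=1d "
        f'| eval matches="{keyword}"'
    )


def build_keyword_search(keywords: list[str], index: str = "*") -> str:
    """Render the first keyword as the base search and the rest as appends."""
    if not keywords:
        raise ValueError("need at least one keyword")
    first, *rest = keywords
    lines = [tstats_for(first, index)]
    for kw in rest:
        # Each extra keyword becomes an append subsearch with the same shape.
        lines.append(f"| append [{tstats_for(kw, index)}]")
    # Final rollup: one row per index/sourcetype with a daily sparkline.
    lines.append(
        "| stats sparkline, sum(count) AS event_count, "
        "values(matches) AS matches by index, sourcetype"
    )
    return "\n".join(lines)


if __name__ == "__main__":
    print(build_keyword_search(["exploit", "mimikatz"]))
```

Generating the query keeps every branch identical, so adding a keyword is a one-line change. Each `append` is still a subsearch, though, so Splunk's subsearch limits apply as the list grows.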